There's a course at TUM where you don't get a textbook. You get a client. iPraktikum pairs student teams with real industry partners, hands them a vague brief and three months of sprint cycles, and expects a working prototype at the end. No hand-holding, no toy problems, just a room full of people who have to build software that someone actually asked for.

I came in with a good start. A few weeks earlier I had built RadioAtlas in the intro course and somehow taken first place. That felt great, but the intro course is a solo sprint. iPraktikum is a completely different beast: eight people, a coach, an industry partner with real expectations, and weekly deadlines that don't care whether your Tuesday was rough. It wasn't just about grades. We were building something a company actually wanted to use.

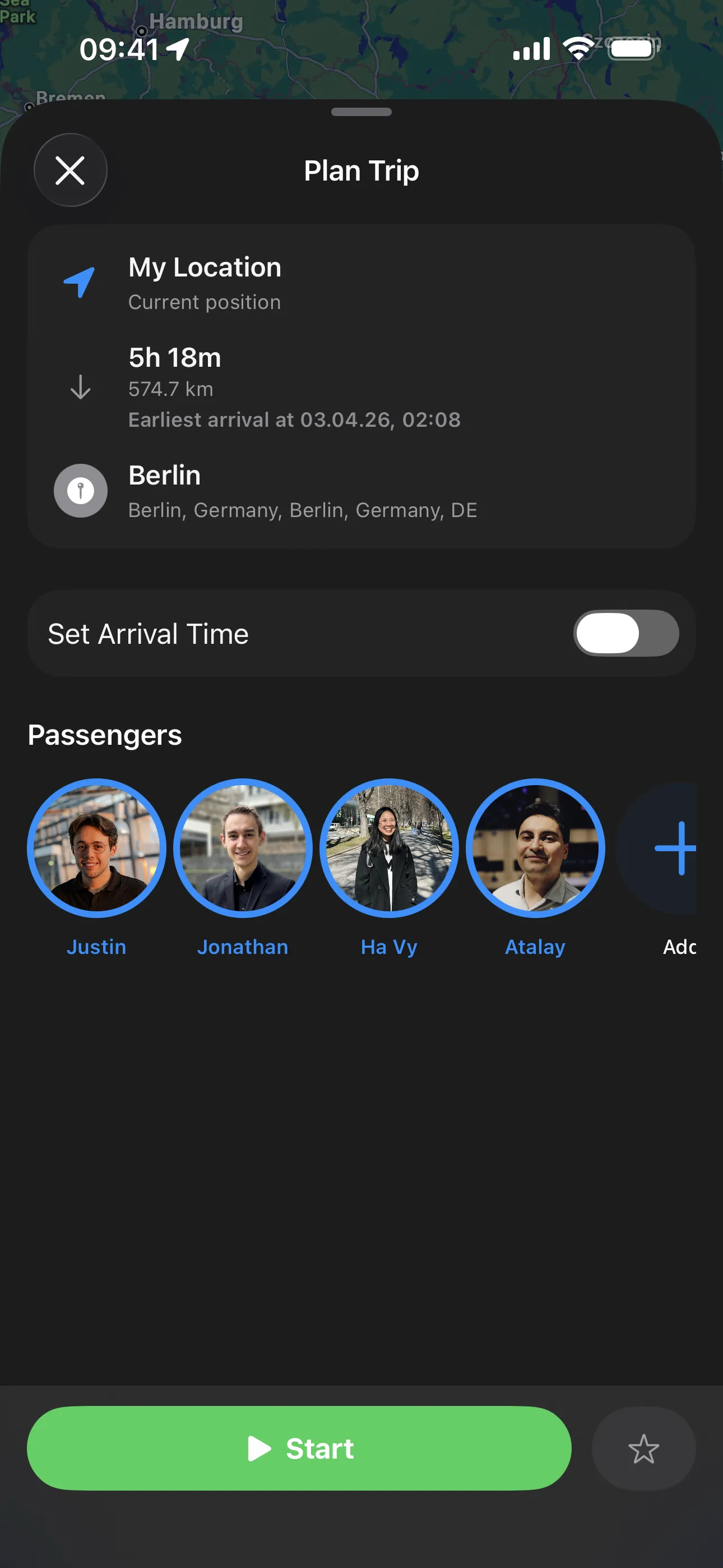

Our partner was Quartett mobile, and the brief sounded deceptively simple: build a smart road trip companion for iOS. That was us. The app should suggest personalized stops along your route using on-device AI. No servers, no cloud, no data leaving the phone. Just Apple Intelligence running locally on your device, trying to figure out where you and your passengers would like to stop.

The product name was sideQuest. The tagline: "Turn Miles into Memories." And it kept us busy for the next three months.

The Brief: Turn Miles into Memories

Quartett mobile's pitch rested on a simple observation: road trips are often boring between start and destination. You drive for hours, maybe stop at a gas station, and the most interesting thing that happens is an argument over which podcast to play. They wanted an app that turns exactly that in-between into the actual experience.

The brief came with two persona scenarios that grounded everything we built. The first persona was Eve, a student planning a trip from Cologne to Amsterdam with two friends. They want fun stops and to learn something along the way, with an arrival deadline of 9 p.m. The second was Charlie, a parent with two kids aged six and ten, traveling with the family from Cologne to Munich. They create a profile, set an arrival time of 8 p.m., and the app suggests events and small stops along the route and reminds them to get moving when time runs short. Beyond the personas, the optional requirements went further: CarPlay integration with suggestions as action sheets on the infotainment display, and a Live Activity on the Lock Screen showing distance and a countdown.

And then the constraint that shaped everything: no servers. All AI processing had to run on-device with Apple's FoundationModels framework. No data leaves the phone. No API keys, no server costs, no rate limits. Complete privacy. That sounds liberating until you realize that an on-device model has only a fraction of the context window and reasoning ability of something like GPT-5 or Claude. We were building an AI app on top of what is essentially a very clever calculator.

The team immediately started poking holes in the idea. Jonathan pointed out that MapKit doesn't support turn-by-turn navigation or voice guidance, two things people expect from anything that looks remotely like a map. I asked about battery drain, because running Foundation Models on the device isn't exactly gentle on the phone. Christian raised questions about time constraints and route deviations. And the requirements contained an interesting tension: "no server component" on the one hand, "use reliable internet sources to enhance suggestions" on the other. We spent a good part of the first week just working out what the brief actually meant.

Designing the Brain: The AI Itinerary Engine

The core of sideQuest is a 4-phase AI pipeline that turns free-form passenger preferences into ranked, actionable stops along your route. Getting there took weeks of iteration, dozens of broken prompts, and more than a few moments of wondering whether on-device AI was up to the task at all.

Phase 0: Passenger Analysis

Every passenger has a free-text preferences field. Something like "loves old castles, can't walk long distances" or "needs to stretch legs, traveling with a toddler". The on-device LLM parses this into structured data: 5-8 searchable keywords per passenger, plus dealbreakers. "Loves the outdoors and quiet spots" becomes ["park", "forest", "nature_reserve", "viewpoint", "hiking"]. It is surprisingly good at this, even on-device.

Dealbreakers work the other way around: they are hard filters that eliminate results before the AI ever sees them. "Wheelchair user" flags all stops without accessibility data. "Traveling with a dog" downranks indoor venues. The system doesn't just find what you want, it also knows what to avoid.
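To make the idea concrete, here is a minimal sketch of such a pre-filter. The `POI` and `Dealbreaker` types and the `applyDealbreakers` function are hypothetical and much simpler than anything in the app, and the sketch assumes that a flagged stop is dropped entirely while a downranked stop is only moved to the back:

```swift
// Hypothetical, simplified model types; not the app's real ones.
struct POI {
    let name: String
    let isIndoor: Bool
    let wheelchairAccessible: Bool?   // nil means no accessibility data
}

enum Dealbreaker {
    case wheelchairUser
    case travelingWithDog
}

/// Filters and reorders POIs before any prompt is built from them.
func applyDealbreakers(_ pois: [POI], _ dealbreakers: Set<Dealbreaker>) -> [POI] {
    var result = pois
    if dealbreakers.contains(.wheelchairUser) {
        // Assumption: keep only stops explicitly marked as accessible.
        result = result.filter { $0.wheelchairAccessible == true }
    }
    if dealbreakers.contains(.travelingWithDog) {
        // Stable partition: outdoor stops first, indoor stops last.
        result = result.filter { !$0.isIndoor } + result.filter { $0.isIndoor }
    }
    return result
}
```

Because this runs before prompt construction, every filtered POI also saves context window space.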

Phase 1: POI Search (The Tool-Calling Pattern)

This is where it gets interesting. The AI does not call APIs directly. Instead, it produces a SearchPlan of city and category pairs, and the app runs those searches in parallel against the Overpass API. This is a tool-calling pattern: the AI decides what to search for, but the app makes the actual network calls. Up to 6 parallel searches, 5 results each, spread across at least 3 different cities along the route.

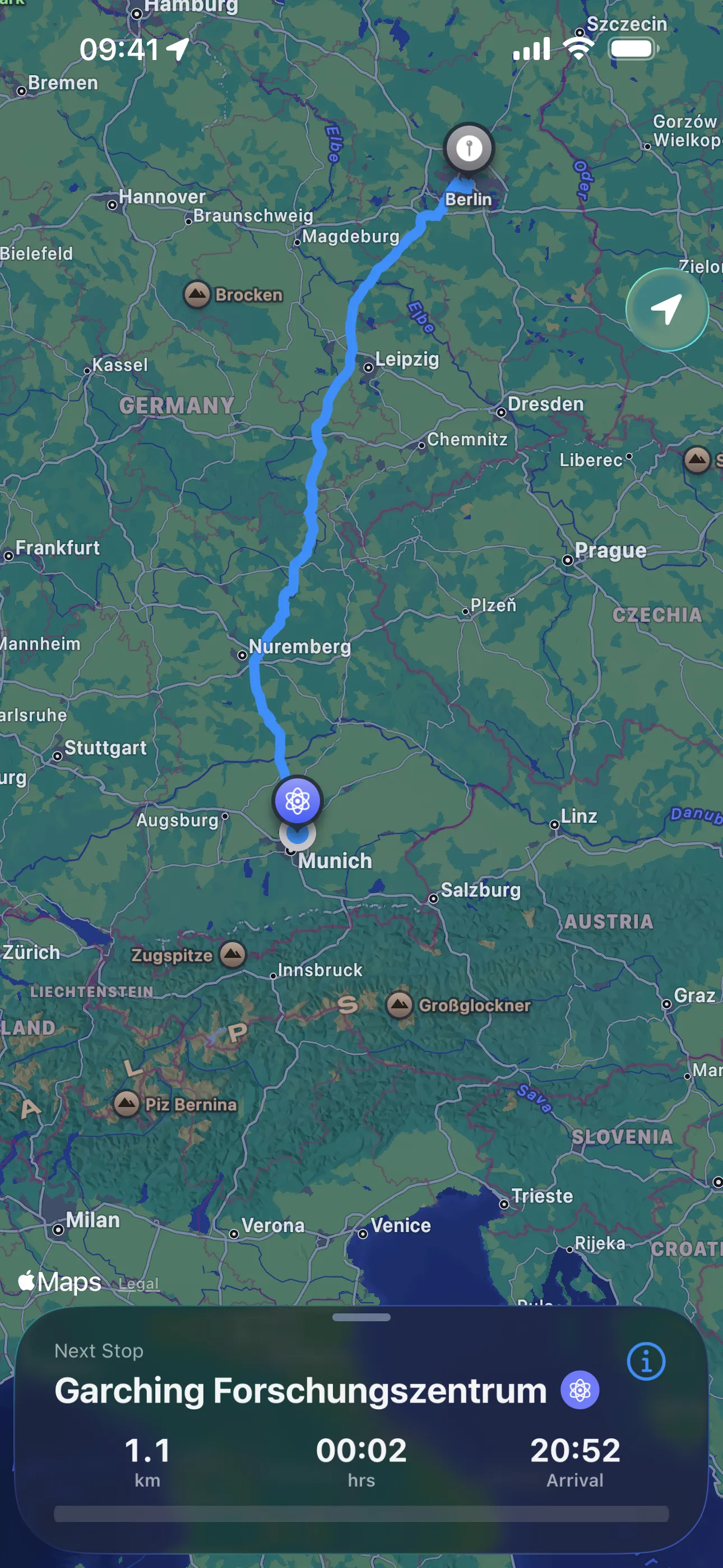

Before the AI can plan anything, the app uses reverse geocoding to extract 5-8 city waypoints from the route polyline. The sampling interval adapts to trip length: roughly 40 km for short trips under 200 km, rising to 100-120 km for drives over 600 km. This gives the AI a geographic vocabulary.
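The adaptive interval can be sketched in a few lines. This is an illustration, not the app's code: the function names are made up, the linear interpolation between 200 km and 600 km is an assumption, and 110 km is simply the midpoint of the 100-120 km range. Only the upper bound of 8 waypoints is enforced here:

```swift
/// Distance between sampled waypoints, in kilometres, for a given route length.
func samplingIntervalKm(forRouteKm routeKm: Double) -> Double {
    let shortTrip = 200.0, longTrip = 600.0
    let minInterval = 40.0, maxInterval = 110.0
    if routeKm <= shortTrip { return minInterval }
    if routeKm >= longTrip { return maxInterval }
    // Assumption: scale linearly between the two documented regimes.
    let t = (routeKm - shortTrip) / (longTrip - shortTrip)
    return minInterval + t * (maxInterval - minInterval)
}

/// Distances from the start (km) at which a city would be reverse-geocoded.
func sampleOffsetsKm(routeKm: Double, maxWaypoints: Int = 8) -> [Double] {
    let step = samplingIntervalKm(forRouteKm: routeKm)
    let offsets = Array(stride(from: step, to: routeKm, by: step))
    return Array(offsets.prefix(maxWaypoints))
}
```

Each offset would then be mapped to a coordinate on the polyline and passed to a reverse geocoder to get a city name.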

Apple's @Generable macro was central to making this work. It lets you define Swift structs that the on-device model can produce as structured output. The @Guide annotations are essentially inline prompt engineering, telling the model exactly what each field means and which constraints apply:
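A minimal sketch of what such a schema can look like. The type names, field names, guide texts, and the `makeSearchPlan` helper are assumptions for illustration, not sideQuest's actual definitions, and the code assumes a deployment target of iOS 26 or later:

```swift
#if canImport(FoundationModels)
import FoundationModels

// Hypothetical schema; names and guide texts are illustrative only.
@Generable
struct CitySearch {
    @Guide(description: "A city name taken verbatim from the provided waypoint list")
    var city: String

    @Guide(description: "One OpenStreetMap-style category, e.g. castle, museum, viewpoint")
    var category: String
}

@Generable
struct SearchPlan {
    @Guide(description: "City and category pairs to search, spread across at least 3 different cities", .maximumCount(6))
    var searches: [CitySearch]
}

/// Asks the on-device model for a plan; the app, not the model, runs the searches.
func makeSearchPlan(waypoints: [String], keywords: [String]) async throws -> SearchPlan {
    let session = LanguageModelSession(
        instructions: "You plan road trip stops. Only use cities from the waypoint list."
    )
    let prompt = """
    Waypoints: \(waypoints.joined(separator: ", "))
    Passenger keywords: \(keywords.joined(separator: ", "))
    """
    // Guided generation: the response content is already a typed SearchPlan.
    let response = try await session.respond(to: prompt, generating: SearchPlan.self)
    return response.content
}
#endif
```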

The beauty of this pattern is that the AI's output is type-checked while it is being generated. If the model produces something that doesn't fit the struct, it fails immediately instead of silently corrupting logic downstream. Here is how the actual search execution works, using Swift's structured concurrency to fire off all searches in parallel:
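A sketch of that fan-out with `withTaskGroup`. The `SearchRequest` and `POIResult` types and the injected `search` closure are hypothetical stand-ins for the app's Overpass client, and treating a failed search as an empty result is an assumption:

```swift
struct SearchRequest: Hashable, Sendable {
    let city: String
    let category: String
}

struct POIResult: Sendable, Equatable {
    let name: String
    let city: String
}

/// Runs up to `maxSearches` requests concurrently and keeps `perSearchLimit` results from each.
func runSearches(
    _ requests: [SearchRequest],
    maxSearches: Int = 6,
    perSearchLimit: Int = 5,
    search: @escaping @Sendable (SearchRequest) async throws -> [POIResult]
) async -> [POIResult] {
    await withTaskGroup(of: [POIResult].self, returning: [POIResult].self) { group in
        for request in requests.prefix(maxSearches) {
            group.addTask {
                // A failed search contributes no results instead of cancelling the others.
                let found = (try? await search(request)) ?? []
                return Array(found.prefix(perSearchLimit))
            }
        }
        var all: [POIResult] = []
        for await batch in group {
            all.append(contentsOf: batch)
        }
        return all
    }
}
```

Batches arrive in completion order, so the combined array is not sorted by city. That is fine here because ranking happens in the next phase.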

Phase 2: Stop Selection and Ranking

Once all searches return, the AI receives every cached POI and picks the best 2-3 diverse stops. Each stop gets a score (0.0-1.0), a reason, a suggested duration, and pre-planned activities. Deduplication ensures that every stop is in a different city and serves a different main purpose. At most one food stop, no repeats.
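The deduplication rule can be sketched as a greedy pass over the scored candidates. The `ScoredStop` type and `selectDiverseStops` function are hypothetical, and the greedy strategy is an assumption about how such a rule might be enforced after the model has scored the stops:

```swift
struct ScoredStop {
    let name: String
    let city: String
    let purpose: String   // e.g. "food", "nature", "culture"
    let score: Double     // 0.0...1.0
}

/// Greedy pick: best score first, skipping cities and purposes that are already used.
func selectDiverseStops(from candidates: [ScoredStop], maxStops: Int = 3) -> [ScoredStop] {
    var usedCities = Set<String>()
    var usedPurposes = Set<String>()
    var picked: [ScoredStop] = []
    for stop in candidates.sorted(by: { $0.score > $1.score }) {
        guard picked.count < maxStops else { break }
        guard !usedCities.contains(stop.city), !usedPurposes.contains(stop.purpose) else { continue }
        usedCities.insert(stop.city)
        usedPurposes.insert(stop.purpose)
        picked.append(stop)
    }
    return picked
}
```

Requiring unique purposes also covers the "at most one food stop" rule without a special case.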

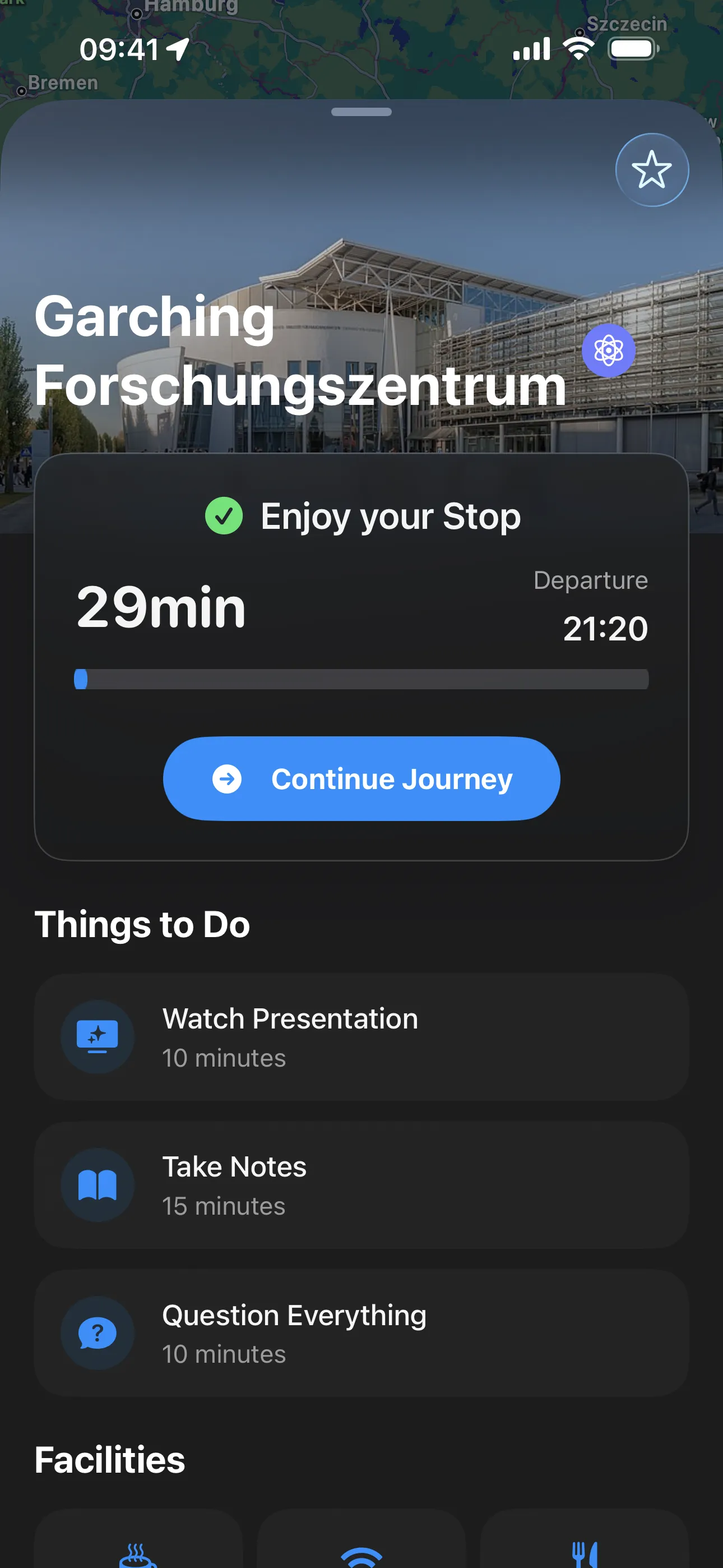

After selection, an enrichment phase queries nearby facilities within 600 m (toilets, parking, WiFi, wheelchair access) and activities within 1200 m (museums, viewpoints, parks). Wikipedia and Wikidata supply descriptions and images through three fallback strategies. Activities are prioritized, with museums at the top and shopping at the bottom.
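For readers who haven't used Overpass, a radius query like this is expressed in Overpass QL with an `around` filter. The sketch below only builds the query string. The function name, the timeout, and the exact OpenStreetMap tags chosen for each facility are assumptions, not the queries the app sends:

```swift
/// Builds an Overpass QL query for tagged elements within a radius of a coordinate.
func nearbyQuery(lat: Double, lon: Double, radiusMeters: Int, tagFilters: [String]) -> String {
    let around = "(around:\(radiusMeters),\(lat),\(lon))"
    // `nwr` matches nodes, ways, and relations; `out center` returns one point per element.
    let statements = tagFilters.map { "  nwr\($0)\(around);" }.joined(separator: "\n")
    return "[out:json][timeout:25];\n(\n\(statements)\n);\nout center;"
}

// Assumed tag choices for the facilities and activities named above.
let facilityFilters = [
    "[\"amenity\"=\"toilets\"]",
    "[\"amenity\"=\"parking\"]",
    "[\"internet_access\"=\"wlan\"]",
    "[\"wheelchair\"=\"yes\"]",
]
let activityFilters = [
    "[\"tourism\"=\"museum\"]",
    "[\"tourism\"=\"viewpoint\"]",
    "[\"leisure\"=\"park\"]",
]
```

Calling it once with `radiusMeters: 600` and `facilityFilters`, and once with `radiusMeters: 1200` and `activityFilters`, would produce the two query bodies for a stop.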

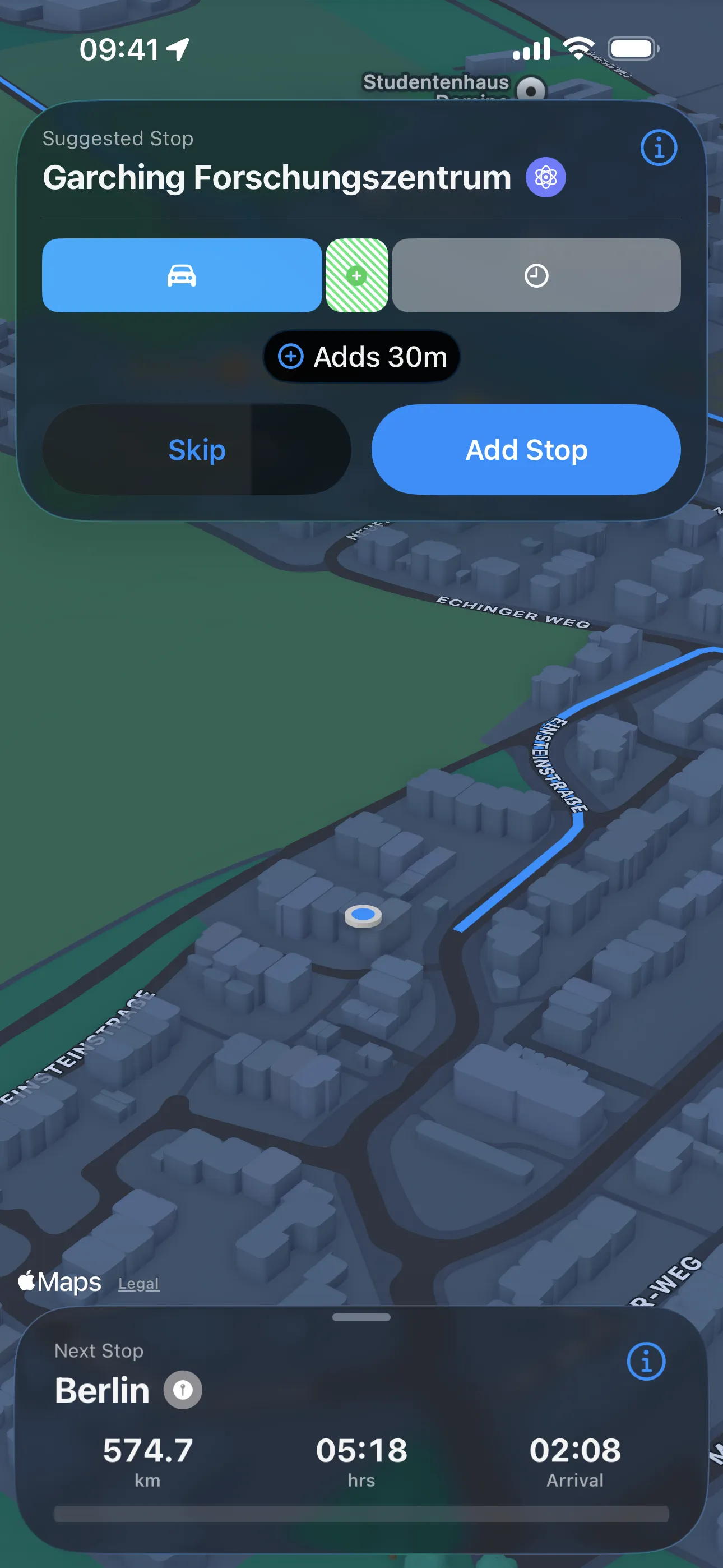

Buffer-Time Monitoring

The pipeline doesn't stop after the first suggestions. sideQuest continuously monitors your time budget. After an initial 20-minute delay that lets the route stabilize, it checks every 10 minutes. If more than 20 minutes of buffer remain, it suggests an additional stop. If your buffer jumps by 30+ minutes, for example because you skipped a 45-minute stop, it triggers a full replan with fresh context.
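Those thresholds boil down to a small decision function. The sketch below is an assumption about how the rules could be encoded. `BufferPolicy` and `BufferAction` are hypothetical names, and checking the replan condition before the extra-stop condition is my own ordering:

```swift
enum BufferAction: Equatable {
    case wait               // nothing to do on this check
    case suggestExtraStop
    case replanFromScratch
}

struct BufferPolicy {
    var initialDelayMin = 20.0      // let the route settle first
    var checkIntervalMin = 10.0     // how often a timer would call `decide`
    var suggestThresholdMin = 20.0  // spare time needed for one more stop
    var replanJumpMin = 30.0        // buffer increase that invalidates the plan

    func decide(elapsedMin: Double, bufferMin: Double, previousBufferMin: Double) -> BufferAction {
        guard elapsedMin >= initialDelayMin else { return .wait }
        if bufferMin - previousBufferMin >= replanJumpMin { return .replanFromScratch }
        if bufferMin > suggestThresholdMin { return .suggestExtraStop }
        return .wait
    }
}
```

Keeping the decision free of timers and side effects makes it easy to unit test each threshold in isolation.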

The Full Stack: One Map, Many Surfaces

If you open sideQuest's codebase expecting a typical SwiftUI app with tab bars and navigation stacks, you'd be confused. The entire UI is a single full-screen map with a sheet on top. No TabView, no router, no coordinator pattern. Just a SheetState enum that controls which view appears in the sheet, while the map behind it stays interactive via .presentationBackgroundInteraction(.enabled).

This enum has nine cases, each mapped to a concrete view and presentation detent. From initial profile setup through search and ride details to navigation, at-stop experiences, and trip completion, the entire user journey is a state machine. ContentView owns roughly 20 @State properties; state flows down through bindings and the environment, and events flow up through closures. That sounds heavyweight, but the single-view architecture means you never lose context. The map is always there, always interactive, always showing your route.
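A stripped-down sketch of the pattern, assuming iOS 17 or later. The case names, the detent values, and `RootView` are hypothetical, and only six of the nine states are shown:

```swift
#if canImport(SwiftUI) && canImport(MapKit)
import SwiftUI
import MapKit

// Hypothetical subset of states; the real enum has nine cases.
enum SheetState {
    case profileSetup, search, rideDetails, navigation, atStop, tripCompleted

    /// Sheet heights offered in each state (illustrative values).
    var detents: Set<PresentationDetent> {
        switch self {
        case .navigation: return [.height(140), .medium]
        case .profileSetup, .tripCompleted: return [.large]
        case .search, .rideDetails, .atStop: return [.medium, .large]
        }
    }
}

struct RootView: View {
    @State private var sheetState: SheetState = .profileSetup

    var body: some View {
        Map()   // full-screen map that is always mounted
            .ignoresSafeArea()
            .sheet(isPresented: .constant(true)) {
                sheetContent
                    .presentationDetents(sheetState.detents)
                    .presentationBackgroundInteraction(.enabled) // map stays interactive
                    .interactiveDismissDisabled()
            }
    }

    @ViewBuilder private var sheetContent: some View {
        switch sheetState {
        case .profileSetup: Button("Start planning") { sheetState = .search }
        case .search: Button("Show ride") { sheetState = .rideDetails }
        default: Text("Remaining states omitted")
        }
    }
}
#endif
```

Every screen change is just an assignment to `sheetState`, which is what makes the journey read like a state machine.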

Dual POI System with Failover

For POI data we built a dual-source system. The Overpass API (OpenStreetMap) gives us comprehensive spatial coverage with flexible tag queries. Apple Maps, via MKLocalSearch, gives us rich metadata and curated results. The app queries Overpass for what exists and then enriches each result with Apple Maps metadata. Because Overpass runs on community servers that can go down or throttle you, we implemented a failover chain with 3 endpoints: if the primary server fails, the app tries two backups before giving up.
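The failover chain itself is a short loop. In this sketch the `fetch` closure stands in for the real network call, the error type is hypothetical, and the endpoint URLs are placeholders rather than the mirrors the app used:

```swift
import Foundation

struct AllEndpointsFailed: Error {
    let attempts: Int
}

/// Tries each endpoint in order and returns the first successful response.
func fetchWithFailover(
    query: String,
    endpoints: [URL],
    fetch: (URL, String) async throws -> Data
) async throws -> Data {
    for endpoint in endpoints {
        do {
            return try await fetch(endpoint, query)
        } catch {
            // Timeout, HTTP 429, or outage: move on to the next mirror.
            continue
        }
    }
    throw AllEndpointsFailed(attempts: endpoints.count)
}

// Placeholder hosts, not real Overpass mirrors.
let overpassEndpoints = [
    URL(string: "https://overpass-primary.example.org/api/interpreter")!,
    URL(string: "https://overpass-backup-1.example.org/api/interpreter")!,
    URL(string: "https://overpass-backup-2.example.org/api/interpreter")!,
]
```

Injecting `fetch` keeps the retry logic testable without touching the network, which matters when the thing you depend on is exactly what tends to be down.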

CarPlay Integration

CarPlay runs in a completely separate UIScene, managed by its own delegate. It has no access to SwiftUI's view hierarchy or @Environment. Communication between the main app and CarPlay goes entirely through NotificationCenter: the phone pushes trip and suggestion updates to CarPlay, and CarPlay sends suggestion decisions back. The phone is always the single source of truth. CarPlay is read-only: it renders that state and reports decisions, but never owns it. Suggestion notifications disappear automatically after 15 seconds, because drivers shouldn't be staring at prompts.
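A minimal sketch of that two-way bridge using plain Foundation. The notification names, the `userInfo` keys, and both classes are hypothetical, and the 15-second auto-dismiss is left out to keep the example short:

```swift
import Foundation

extension Notification.Name {
    // Hypothetical notification names.
    static let suggestionOffered = Notification.Name("sideQuest.suggestionOffered")
    static let suggestionDecided = Notification.Name("sideQuest.suggestionDecided")
}

/// Phone side: owns the state and pushes suggestions to the CarPlay scene.
final class PhoneTripStore {
    private let center: NotificationCenter
    private var token: NSObjectProtocol?
    private(set) var acceptedStops: [String] = []

    init(center: NotificationCenter = .default) {
        self.center = center
        token = center.addObserver(forName: .suggestionDecided, object: nil, queue: nil) { [weak self] note in
            guard let stop = note.userInfo?["stop"] as? String,
                  (note.userInfo?["accepted"] as? Bool) == true else { return }
            self?.acceptedStops.append(stop)
        }
    }

    deinit { if let token { center.removeObserver(token) } }

    func offer(_ stop: String) {
        center.post(name: .suggestionOffered, object: nil, userInfo: ["stop": stop])
    }
}

/// CarPlay side: shows what it receives and reports the driver's choice back.
final class CarPlayBridge {
    private let center: NotificationCenter
    private var token: NSObjectProtocol?
    private(set) var visibleSuggestion: String?

    init(center: NotificationCenter = .default) {
        self.center = center
        token = center.addObserver(forName: .suggestionOffered, object: nil, queue: nil) { [weak self] note in
            self?.visibleSuggestion = note.userInfo?["stop"] as? String
        }
    }

    deinit { if let token { center.removeObserver(token) } }

    func decide(accepted: Bool) {
        guard let stop = visibleSuggestion else { return }
        visibleSuggestion = nil
        center.post(name: .suggestionDecided, object: nil, userInfo: ["stop": stop, "accepted": accepted])
    }
}
```

The CarPlay side never mutates trip data. It only posts a decision, and the phone decides what that means for the plan.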

Live Activities and the SwiftUI Timer Trick

The Live Activity on the Lock Screen shows your current stop, distance, progress, and a countdown timer. The timer updates every second, even when the app is in the background, and needs no push notifications. How? One line of SwiftUI:
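A sketch of the kind of line that does this, using SwiftUI's timer text style. The `StopCountdown` view and its `departure` property are hypothetical; in a real Live Activity the date would come from the activity's content state:

```swift
#if canImport(SwiftUI)
import SwiftUI

/// Countdown label for a Live Activity view.
struct StopCountdown: View {
    let departure: Date   // hypothetical: when the stop should end

    var body: some View {
        // The system re-renders this text every second; no app code runs in between.
        Text(departure, style: .timer)
            .monospacedDigit()
    }
}
#endif
```

`Text(timerInterval:countsDown:)` is a related initializer that takes an explicit date range instead of a single target date.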

That's it. SwiftUI's built-in timer rendering does the rest. The Dynamic Island shows the same data in four surface sizes: expanded with full details and a progress bar, compact leading and trailing with icon + distance, and minimal with just the icon. If the app crashes, the activity expires automatically after a 15-minute stale interval, so your Lock Screen doesn't show zombie data forever.

Software Theatre: Where Code Meets Stage

iPraktikum has a tradition that sounds crazy if you've never experienced it. Twice a semester every team has to present its work, but not just with slides and demos. Each team performs a Software Theatre: a scripted scene, acted live on stage, that demonstrates the app in a realistic scenario. Think of it as a product demo in the form of a short film, with dialogue, props, and stage directions. There are two milestones: the Design Review (DR) in the middle and the Client Acceptance Test (CAT) at the end.

Design Review: The Road to Berlin

For the Design Review, our four actors (Kartik, Cyrine, Raj, and Christian) played out a road trip from Garching to Berlin. Raj suggests using sideQuest to plan it. Cyrine wants nature, specifically "not a parking lot with one sad tree". Christian wants to see the Audi Forum in Ingolstadt. They set up the app, the AI suggests three stops, and they drive off in our prop.

About that prop. We had a small red Tesla ride-on toy car. The kind a five-year-old would drive around the driveway. We used it as our "vehicle" in every stage scene. The dry-run feedback included: "Nitpicking: clean the Tesla." We did not clean the Tesla.

After the theatre, Ha Vy and I presented the architecture. The dry-run feedback for our part was encouraging: "AOM: Well done! Easy to follow, really good job!" That felt good. We had spent a lot of time making complex decisions sound simple on a single slide.

CAT Theatre: The Meta Road Trip

The CAT Theatre was more ambitious. The concept: the entire presentation IS a sideQuest stop. The audience doesn't know that at the start.

Scene 1. Corporate meeting room. Four of us (me, Ha Vy, Jonathan, Atalay) sit hunched over MacBooks. Max (our project lead) pokes his head through the door: "Hey, meeting's cancelled!" We all snap our MacBooks shut in sync. Jonathan: "Finally. I was running out of ways to look busy."

I open sideQuest and search for a trip to Bielefeld. No results. "Ah true, forgot that it doesn't exist," I say. (For non-Germans: there is a running conspiracy joke that the city of Bielefeld doesn't exist.) I switch to Disneyland. But sideQuest suggests a stop in... Garching. Fifteen minutes away.

It says there's an... ongoing CAT presentation.

Ha Vy: "CAT? You mean those excavator things?" Me: "No. Don't you remember which course you picked this semester?" Ha Vy: "Why would anyone voluntarily go to a CAT presentation on their road trip to Disneyland?"

And then came the line that got the biggest laugh of the evening:

Note: If you skip this stop, you will receive a 5.0. (For non-Germans: a 5.0 is a failing grade.)

Scene 3. An engine noise grows louder. The person on stage looks confused. And then a Tesla glides onto the stage from the right. I'm at the wheel. Ha Vy, Jonathan, and Atalay walk behind the vehicle. I park right next to the lectern.

From the audience, Dima (our coach) yells: "You can't park there!" Scripted. I hold up my phone: "According to sideQuest, this is the best entertainment possible." I tap the screen. The atStopView appears with a 30-minute countdown. Atalay reads the "Things to Do" section aloud: "Watch presentation. Take notes. Question everything." I inspect the facilities: "That one's... a coffee cup with a question mark? And that one appears to be a crying emoji next to a textbook." Atalay: "Accurate."

We sit down in the front row. The Tesla stays parked next to the lectern for the entire presentation. And at the end, when the actual content is over, we come back on stage. I whisper: "Wait, was this entire thing about the app?" Ha Vy: "I think this was a presentation about the thing we're using." Atalay: "Meta."

It was honestly one of the most fun things I've done at university. The dry-run feedback said it was "on the risky side" but ended with "they like risks, it's fine". The audience gave us a standing ovation.

The Team Behind sideQuest

Eight developers, one coach, one project lead, one industry partner. Our coach was Dima Dmukh, and our project lead was Maximilian Anzinger.

I had several roles. Architecture presenter with Ha Vy at the Design Review. Theatre actor in both presentations, and driver in the CAT.

What made the team work wasn't the process. It was the culture. We had weekly sprints with a new functional version every week, GitLab merge requests with automatic reviewer assignment, a 4-stage CI pipeline (assign reviewers, lint, build, release), and SwiftLint to keep the code style consistent. We used XcodeGen to generate project files and avoid the classic merge-conflict nightmare with .xcodeproj files.

But the sprints were only the structure. The soul of the team was in the things between deadlines. Bowling nights. Pool nights where Dima played suspiciously precise shots. A winter trip to the Allianz Arena to film the trailer. Elevator selfies with twelve people in winter jackets squeezed into a steel box, thumbs up, everyone laughing.

For the CAT we had our own sideQuest kit. White shirts and numbered jerseys with "Turn Miles into Memories" and sideQuest branding. The feedback from the dry runs kept pointing out that our branding was "the strongest of all teams". That felt earned. The jerseys, the tagline, the Tesla prop, the theatre concept. Every piece of it was a deliberate choice.

Looking Back: Lessons from the Road

Three months of building sideQuest gave me a much sharper understanding of where on-device AI shines and where it stumbles. The small context window means the model can produce repetitive or generic suggestions. Sometimes it misjudges preferences in entertaining ways, for example by suggesting a brewery to a family with small children. We cushioned this by capping waypoints at 5-8 cities, limiting POI results to 5 per search, and writing ever more specific prompts in the SelectionPromptBuilder. Very long trips with detailed passenger profiles could still trigger a contextWindowExceeded error. That is the ceiling you hit with on-device models, and there is no clever engineering trick around a hard hardware limit.

MapKit was a blessing and a frustration at once. Out of the box it gave us a beautiful, performant map with clustering and route rendering. But it doesn't support turn-by-turn navigation or voice guidance. Jonathan had flagged that in his initial questions, and he was right. It meant sideQuest could suggest stops and show routes, but would never be a full navigation replacement. We had to accept that and design around it.

The Overpass API was indispensable for spatial queries but unreliable in practice. Community-run servers return HTTP 429 when they are busy, or go down completely at peak times. The 3-endpoint failover chain saved us several times during demos, but it is exactly the kind of infrastructure dependency that makes you nervous in live presentations.

What went well? The branding. The feedback from every dry run pointed to it: "Beautiful slides, the general slide design is on point." "You have such good branding, it is the strongest of all teams, use it more." The pipeline architecture. Separating AI decisions from API execution was the best design decision we made. And the theatre, which wove the app into a live performance that doubled as a demo, made our work memorable in a way no slide deck could have.

What was hard? Prompt engineering for an on-device model is fundamentally different from working with cloud-based AI. You can't just throw more context at the problem. You have to be surgical about which information the model sees, when it sees it, and how you validate its output. The @Generable macro helped enormously with validation, but the prompt crafting was pure trial and error.

On a personal level, the theatre pushed me out of my comfort zone more than any code review. Standing on a stage, wearing an earpiece, driving a toy car past a confused presenter, delivering scripted lines in front of a room full of people. That is not what I expected at a technical university. The fact that it worked, that the audience genuinely laughed, clapped, and gave a standing ovation, confirmed that software doesn't have to be presented like software.

What Could Be Different

No project is ever finished, it's just due. If we had had another semester, here is what I would tackle:

- Server-side AI as an option. Cloud-based models would unlock larger context windows, better reasoning, and more consistent output. The repetitive-suggestion problem largely disappears when you aren't constrained by on-device compute. An opt-in cloud mode with the on-device path as a privacy-first fallback would be the best of both worlds.

- Real turn-by-turn navigation. MapKit's limits meant we couldn't compete with Apple Maps or Google Maps on navigation. Integrating a third-party navigation SDK, or expanded MapKit capabilities from Apple, would turn sideQuest into a true all-in-one road trip app.

- Real preference learning. The app already feeds accepted and rejected suggestions back into the prompt for the next round. With more time, that could become a real learning loop that weights preferences across multiple trips instead of only within the current session.

- Full offline mode for POI data. Core journey data is persisted, but POI search still needs internet. Pre-caching POI data for a planned route before departure would make sideQuest usable in areas with poor reception.

- Weather-aware suggestions. We modeled weather conditions in a WeatherCondition enum but never connected it to a real weather API. Outdoor stops in the rain are not a good recommendation.

- Group profiles. Save recurring passenger groups like "Family with Kids" or "Weekend Friends" for quick selection instead of re-entering preferences on every trip.

- Apple Watch support. A companion app for at-stop information and basic trip controls from the wrist.

iPraktikum as a course is unlike anything else I've experienced at university. The combination of real clients, sprint deadlines, live presentations, and team dynamics creates a pressure no exam can replicate. You learn things about yourself that pure coding never teaches: how you communicate under stress, how you give and take feedback, and whether your architecture decisions hold up when someone else has to build on them.

Six teams presented at the CAT this semester: Bayerische Polizei (police fleet management), m3 management consulting (network measurement with Apple Watch + CarPlay), Quartett mobile (smart road trip companion), Siemens (machine sensor analysis on iPadOS), Maiß (gamified AI learning), and TUM LifeLong Learning (AI coaching companion). Every team delivered something real. Every theatre was different. Every demo had at least one moment where you could see months of work crystallize into something tangible on screen.

sideQuest started as a brief about making road trips more fun. It ended as a working AI itinerary engine with CarPlay, Live Activities, two POI sources, a toy Tesla, and a meta theatre concept that turned our own presentation into a stop on the route. And a meta presentation with so many memes that it may have made our project lead uncomfortable. The code is done for now. You can find the official project page on the TUM website.