I was recently accepted into CHECK24's Generation Developer (GenDev) stipend program, a huge honor and an amazing opportunity. But before the celebrations could begin, there was a small hurdle: a comprehensive coding challenge. The task? Build a full-stack, real-time internet provider comparison portal from scratch.

This post is the story of that project, which I called BetterSurf. It's a deep dive into the engineering decisions, architectural rabbit holes, and resilience patterns I used to build a system that not only works but thrives in the chaos of integrating multiple, unpredictable third-party services (ServusSpeed 😡). Let's pop the hood.

The Clown Show of APIs

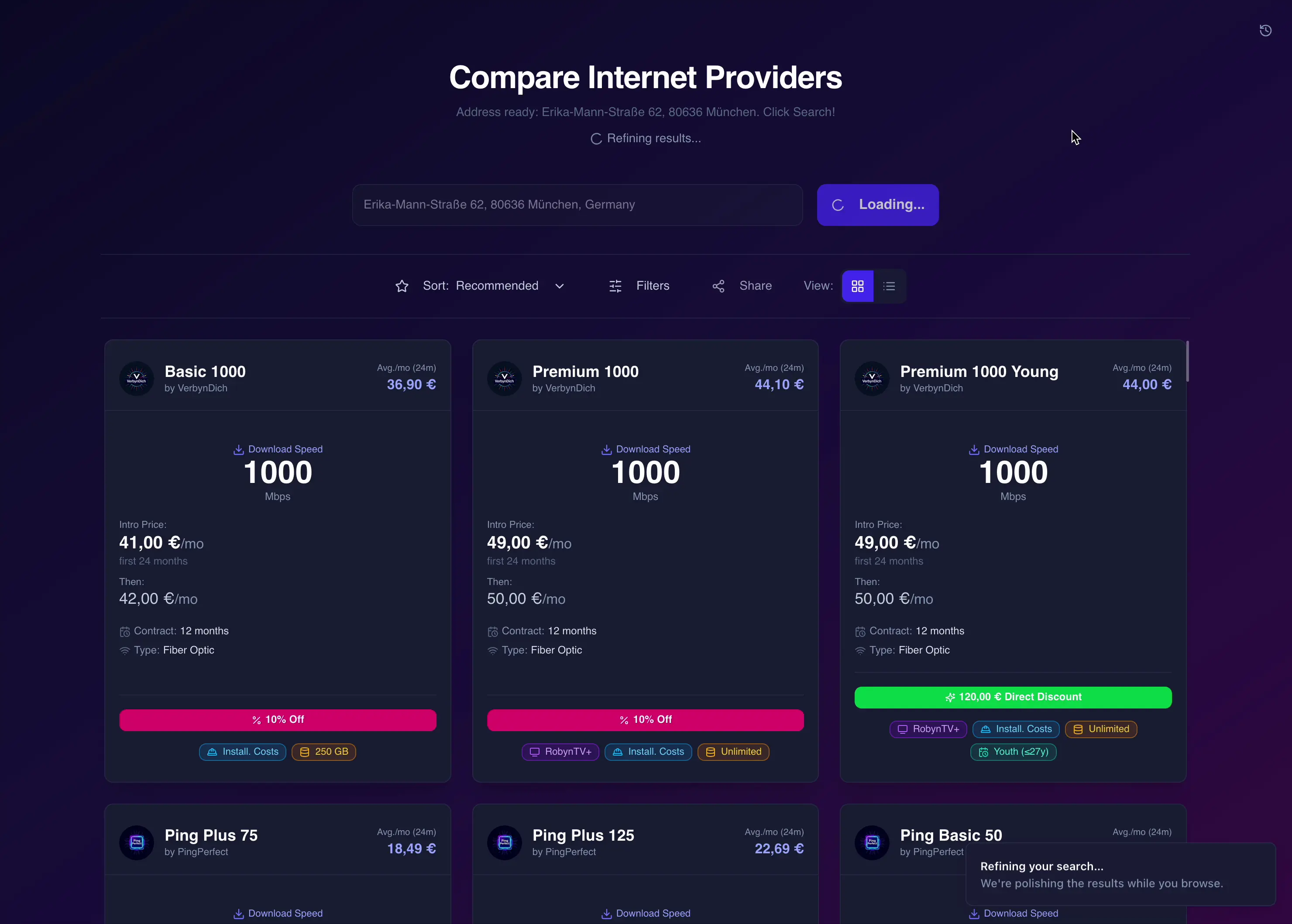

The task was to build a full-stack comparison portal that gathers offers from five different provider APIs and presents them in a user-friendly, real-time interface.

To simulate a real-world scenario, CHECK24 didn't just give me five clean REST APIs. Oh no, that would be too easy. They provided a beautifully chaotic mix of simulated services, each with its own quirks and personality disorders. The real challenge was orchestrating this digital circus:

WebWunder (The Relic)

A legacy SOAP endpoint. This meant dusting off the zeep library and wrestling with WSDL files and XML requests. Even that wasn't enough: the API didn't handle the standard request format properly, so I had to reconstruct the exact XML it expected and work directly with the raw XML. A true blast from the past.
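
For illustration, here's a minimal sketch of the hand-built-envelope approach using only the standard library's ElementTree. The service namespace, the `getOffers` operation, and all field names are hypothetical placeholders, not WebWunder's actual WSDL contract.

```python
import xml.etree.ElementTree as ET

# Hypothetical namespaces and element names; the real WSDL defines its own.
SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"
SVC_NS = "http://example.com/webwunder"

ET.register_namespace("soapenv", SOAP_NS)
ET.register_namespace("ws", SVC_NS)


def build_envelope(street: str, house_number: str, plz: str, city: str) -> str:
    """Hand-build the SOAP request instead of letting a client library generate it."""
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    ET.SubElement(envelope, f"{{{SOAP_NS}}}Header")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    request = ET.SubElement(body, f"{{{SVC_NS}}}getOffers")
    for tag, value in (("street", street), ("houseNumber", house_number),
                       ("plz", plz), ("city", city)):
        ET.SubElement(request, f"{{{SVC_NS}}}{tag}").text = value
    return ET.tostring(envelope, encoding="unicode")


def parse_products(xml_text: str) -> list[dict[str, str]]:
    """Flatten every <ws:product> element of a raw response into a dict of its children."""
    root = ET.fromstring(xml_text)
    products = []
    for product in root.iter(f"{{{SVC_NS}}}product"):
        # Strip the "{namespace}" prefix from each child tag.
        products.append({child.tag.split("}")[-1]: (child.text or "").strip()
                         for child in product})
    return products
```

The resulting string could then be posted as-is, e.g. with `httpx.post(url, content=envelope, headers={"Content-Type": "text/xml; charset=utf-8"})`, bypassing the library's own serializer.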

ByteMe (The CSV Spitter)

This REST API decided JSON was too mainstream and returned a raw CSV file instead. I built a mini data pipeline with pandas to fetch, parse, and clean its output. Overall, not that bad, except that the CSV was often malformed or broken. A neat factory pattern solved that; more on it in a bit.
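
To illustrate the factory idea, here's a minimal sketch that uses the standard library's `csv` module instead of pandas so it runs without dependencies. The column names and the `RawOffer` shape are hypothetical; the point is that one factory function per row either returns a usable offer or `None`, so a single broken line never poisons the whole batch.

```python
import csv
import io
from dataclasses import dataclass
from typing import Optional


@dataclass
class RawOffer:
    provider: str
    name: str
    monthly_price_cents: int
    speed_mbps: int


def offer_from_row(row: dict[str, Optional[str]]) -> Optional[RawOffer]:
    """Factory: turn one CSV row into an offer, or return None if it's unusable."""
    name = (row.get("productName") or "").strip()
    price = row.get("monthlyCostInCent")  # hypothetical column names
    speed = row.get("speed")
    if not name or price is None or speed is None:
        return None  # required column missing, e.g. a truncated line
    try:
        return RawOffer("ByteMe", name, int(price.strip()), int(speed.strip()))
    except ValueError:
        return None  # garbage where a number should be


def parse_byteme_csv(text: str) -> list[RawOffer]:
    offers = []
    for row in csv.DictReader(io.StringIO(text)):
        offer = offer_from_row(row)
        if offer is not None:
            offers.append(offer)  # malformed rows are skipped, not fatal
    return offers
```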

PingPerfect (The Gatekeeper)

A standard REST/JSON API, but with a catch: every single request had to be signed with HMAC‑SHA256. Again, not that bad.
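
A minimal signing sketch is below. The exact scheme (which fields are signed, header names, timestamp format) is defined by the provider, so `X-Timestamp`, `X-Signature`, and the `timestamp:payload` message layout here are assumptions for illustration.

```python
import hashlib
import hmac
import json
import time
from typing import Any, Optional


def sign_request(secret: str, body: dict[str, Any],
                 timestamp: Optional[int] = None) -> tuple[str, dict[str, str]]:
    """Serialize the body once and sign exactly those bytes with HMAC-SHA256.

    Returns the payload to send plus the headers to send with it. Header names
    and the "timestamp:payload" layout are hypothetical placeholders.
    """
    ts = int(time.time()) if timestamp is None else timestamp
    # Compact, key-sorted JSON so the signed bytes are deterministic.
    payload = json.dumps(body, separators=(",", ":"), sort_keys=True)
    message = f"{ts}:{payload}".encode("utf-8")
    signature = hmac.new(secret.encode("utf-8"), message, hashlib.sha256).hexdigest()
    headers = {
        "Content-Type": "application/json",
        "X-Timestamp": str(ts),
        "X-Signature": signature,
    }
    return payload, headers
```

The important design choice is sending the exact `payload` string that was signed (e.g. `httpx.post(url, content=payload, headers=headers)`); letting the HTTP client re-serialize the dict could change a single byte and invalidate the signature.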

VerbynDich (The Slow Drip)

A paginated REST API that demanded patience. To speed things up, I fetched pages concurrently but used an asyncio.Semaphore to cap how many requests were in flight at once. Finding that cap became a science of its own: sometimes the API handled 10 concurrent requests without throwing a tantrum, sometimes only 4! Another quirk: it returned offers only as free-form text, so I had to extract the relevant data with regex patterns. Because the responses varied wildly, I ended up building a sizeable rule set.
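
Here's a minimal sketch of both ideas combined: concurrent page fetches capped by an `asyncio.Semaphore`, and a tiny regex rule set applied to each page's text. The response shape (`last_page`, `description`), the English sample phrasing, and the three patterns are hypothetical; the real rule set was much larger.

```python
import asyncio
import re
from typing import Any, Awaitable, Callable

# Hypothetical rule set; each pattern pulls one field out of the free-form text.
SPEED_RE = re.compile(r"(\d+)\s*Mbit/s", re.IGNORECASE)
PRICE_RE = re.compile(r"(\d+)[.,](\d{2})\s*(?:€|EUR)")
CONTRACT_RE = re.compile(r"(\d+)\s*months?", re.IGNORECASE)


def parse_offer_text(text: str) -> dict[str, int]:
    """Apply every rule; a field whose pattern doesn't match is simply left out."""
    fields: dict[str, int] = {}
    if m := SPEED_RE.search(text):
        fields["speed_mbps"] = int(m.group(1))
    if m := PRICE_RE.search(text):
        fields["price_cents"] = int(m.group(1)) * 100 + int(m.group(2))
    if m := CONTRACT_RE.search(text):
        fields["contract_months"] = int(m.group(1))
    return fields


async def fetch_all_pages(fetch_page: Callable[[int], Awaitable[dict[str, Any]]],
                          max_concurrency: int = 4) -> list[dict[str, int]]:
    """Fetch page 0 to learn the page count, then the rest concurrently under a cap."""
    first = await fetch_page(0)
    semaphore = asyncio.Semaphore(max_concurrency)

    async def limited(page: int) -> dict[str, Any]:
        async with semaphore:  # at most max_concurrency requests in flight
            return await fetch_page(page)

    rest = await asyncio.gather(*(limited(p) for p in range(1, first["last_page"] + 1)))
    return [parse_offer_text(page["description"]) for page in (first, *rest)]
```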

ServusSpeed 😡 (The Snail)

A notoriously slow, multi-step API: one call to fetch product IDs, followed by parallel requests for each ID's details. The initial ID list could take up to 15 s, each detail call another 15 s, and the API throttled you if you ran more than three detail calls at once! Interestingly, when the backend ran on an AWS EC2 instance, ServusSpeed sometimes sped up dramatically (2–3 s), though not always. I'll explain my mitigation tricks later.
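
One way to structure such a two-step fan-out is sketched below: at most three detail calls in flight, a timeout on every call, and `asyncio.gather(..., return_exceptions=True)` so a single slow or failing ID is dropped instead of sinking the whole batch. The callables and the 20-second default timeout are assumptions, not ServusSpeed's real client.

```python
import asyncio
from typing import Any, Awaitable, Callable


async def fetch_servus_offers(
    get_ids: Callable[[], Awaitable[list[str]]],
    get_details: Callable[[str], Awaitable[dict[str, Any]]],
    max_parallel: int = 3,          # more than three at once triggers throttling
    call_timeout: float = 20.0,     # hypothetical budget per call
) -> list[dict[str, Any]]:
    # Step 1: the (slow) list of product IDs.
    ids = await asyncio.wait_for(get_ids(), timeout=call_timeout)
    semaphore = asyncio.Semaphore(max_parallel)

    async def details(product_id: str) -> dict[str, Any]:
        async with semaphore:
            return await asyncio.wait_for(get_details(product_id), timeout=call_timeout)

    # Step 2: detail calls in parallel, at most max_parallel at a time.
    results = await asyncio.gather(*(details(pid) for pid in ids), return_exceptions=True)
    # Keep whatever arrived; a timed-out or failed ID is dropped, not fatal.
    return [r for r in results if not isinstance(r, BaseException)]
```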

My Blueprint for Sanity: A Decoupled Architecture

How do you tame that kind of chaos? My answer was a classic decoupled two-tier architecture. On the one side, a zippy Next.js 15 (React 19) frontend, styled with Tailwind CSS and shadcn/ui, handles everything the user sees. On the other, a robust FastAPI (Python) backend does all the heavy lifting. This separation was non-negotiable. It keeps API keys and complex integration logic safely tucked away on the server, leaving the frontend lean, fast, and secure.

Rule #1: Expect Things to Break

When you're juggling five external APIs, things are going to break. It's not a question of if, but when. A core requirement was that one failing provider shouldn't bring the whole app down. So, my first priority was building a multi-layered resilience strategy.

Intelligent Retries with Tenacity

Every external API call was wrapped in my secret weapon: the @tenacity.retry decorator. But this wasn't just a blind "try again" loop; it was a calculated strategy.

Each call is tried up to 8 times, starting with a tiny delay that backs off exponentially up to a cap of 1 second, which gives a flaky service time to recover. Crucially, it only retries on specific, temporary errors, like network hiccups (httpx.HTTPError) or a custom ProviderError. If we get a permanent error like a 401 Unauthorized, we fail fast instead of wasting time.

The Circuit Breaker Pattern

Retries are great, but what if a service is completely down? Constantly hammering it just makes things worse. To prevent this, I implemented a Circuit Breaker for each provider. Think of it like a circuit in your house:

- Closed (All Good): Requests flow normally.

- Open (Something's Wrong): After 5 consecutive failures, the circuit "trips" and opens. For the next 5 seconds, we don't even try to call the API. We fail instantly, saving resources and giving the downed service a break. The 5-second cooldown is deliberately short; it could be increased, but for demonstration purposes I wanted maximum responsiveness.

- Half-Open (Testing the Waters): After the cooldown, we let a single test request through. If it succeeds, the circuit closes. If it fails, we trip it open again.
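
The three states map onto a small class. This is a minimal asyncio sketch rather than the exact implementation: the class and method names are hypothetical, and the clock is injectable so the cooldown can be tested without actually sleeping.

```python
import time
from typing import Any, Awaitable, Callable, Optional


class CircuitOpenError(Exception):
    """Raised instead of calling the provider while the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, cooldown: float = 5.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.cooldown = cooldown
        self.clock = clock
        self.failures = 0                       # consecutive failures while closed
        self.opened_at: Optional[float] = None  # None means the circuit is closed
        self.trial_running = False              # is the single half-open probe in flight?

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.cooldown:
            return "half-open"
        return "open"

    async def call(self, fn: Callable[..., Awaitable[Any]], *args: Any) -> Any:
        state = self.state
        if state == "open" or (state == "half-open" and self.trial_running):
            raise CircuitOpenError("provider temporarily disabled")  # fail instantly
        probing = state == "half-open"
        if probing:
            self.trial_running = True  # let exactly one test request through
        try:
            result = await fn(*args)
        except Exception:
            self._record_failure(probing)
            raise
        finally:
            if probing:
                self.trial_running = False
        self._reset()  # any success closes the circuit
        return result

    def _record_failure(self, probing: bool) -> None:
        if probing:
            self.opened_at = self.clock()  # probe failed: trip open again
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # too many failures in a row: trip open

    def _reset(self) -> None:
        self.failures = 0
        self.opened_at = None
```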

The Abstract Provider Pattern

To apply these rules cleanly, I created an abstract ProviderBase class. All the resilience logic (Tenacity, Circuit Breaker) was implemented there. Each specific provider class (like WebWunderProvider) then simply inherited from this base. This was a lifesaver: it kept my code DRY and ensured every single integration was equally bulletproof without repeating myself.
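
The shape of that base class is essentially the template-method pattern: the public method is defined once, and subclasses fill in only the provider-specific hooks. In this minimal sketch, a plain retry loop with a timeout stands in for the tenacity and circuit-breaker wrapping, and the hook names `_request` and `_to_offer` are hypothetical.

```python
import abc
import asyncio
from typing import Any, Optional

Offer = dict[str, Any]


class ProviderBase(abc.ABC):
    """Cross-cutting resilience lives here; subclasses only talk to their own API."""

    name = "base"

    def __init__(self, attempts: int = 3, timeout: float = 10.0) -> None:
        self.attempts = attempts
        self.timeout = timeout

    async def fetch_offers(self, address: dict[str, str]) -> list[Offer]:
        """Template method shared by every provider (a stand-in for tenacity + breaker)."""
        last_error: Optional[BaseException] = None
        for _ in range(self.attempts):
            try:
                raw = await asyncio.wait_for(self._request(address), self.timeout)
            except (asyncio.TimeoutError, ConnectionError) as exc:
                last_error = exc  # temporary problem: try again
                continue
            return [self._to_offer(item) for item in raw]
        raise RuntimeError(f"{self.name}: gave up after {self.attempts} attempts") from last_error

    @abc.abstractmethod
    async def _request(self, address: dict[str, str]) -> list[dict[str, Any]]:
        """Provider-specific network call (SOAP, CSV, signed REST, ...)."""

    @abc.abstractmethod
    def _to_offer(self, item: dict[str, Any]) -> Offer:
        """Provider-specific mapping into the shared offer shape."""
```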

Herding Cats: Turning Data Chaos into Clarity

If the APIs were a chaotic bazaar, the data they returned was a jumble of mismatched goods. I got inconsistent field names, weird formats, and unreliable values. This is where Pydantic became my best friend, acting as a strict but fair bouncer at the door of my application. I defined a single, unified Offer model that became my source of truth, forcing all incoming data to:

- Get in Line (Strict Types): No more guessing whether a value is a string or a number. Every field was cast to its proper type (`int`, `float`, etc.).

- Stop Floating-Point Nonsense: All money was converted to integer cents. This completely avoids the infamous floating-point rounding errors that plague financial calculations.

- Speak the Same Language: Contract durations like "12 months," "1 year," and `12` were all normalized into a clean integer `12`.

- Get Smarter (Computed Fields): The model automatically enriched the data. For instance, if a raw offer had a `tv_package_name`, a boolean `tv_included` field was automatically set to `true`.

- And a lot more: I really put in the work to make the parsing and validation bulletproof.
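
Here's a minimal Pydantic v2 sketch of a few of those rules. The field names, the `monthly_price_eur` input alias, and the duration regex are assumptions for illustration; the real model covers many more cases.

```python
import re
from decimal import ROUND_HALF_UP, Decimal, InvalidOperation
from typing import Any, Optional

from pydantic import BaseModel, Field, computed_field, field_validator


class Offer(BaseModel):
    provider: str
    name: str
    # Providers send euros in many shapes; the model stores integer cents only.
    monthly_price_cents: int = Field(validation_alias="monthly_price_eur")
    contract_months: Optional[int] = None
    tv_package_name: Optional[str] = None

    @field_validator("monthly_price_cents", mode="before")
    @classmethod
    def euros_to_cents(cls, value: Any) -> int:
        text = str(value).replace("€", "").replace(",", ".").strip()
        try:
            # Decimal avoids float artifacts: int(19.99 * 100) would give 1998.
            cents = (Decimal(text) * 100).quantize(Decimal("1"), rounding=ROUND_HALF_UP)
        except InvalidOperation:
            raise ValueError(f"unparseable price: {value!r}") from None
        return int(cents)

    @field_validator("contract_months", mode="before")
    @classmethod
    def normalize_duration(cls, value: Any) -> Optional[int]:
        # "12 months", "1 year" and 12 all become 12.
        if value is None or isinstance(value, int):
            return value
        match = re.search(r"(\d+)\s*(years?|months?)?", str(value), re.IGNORECASE)
        if match is None:
            raise ValueError(f"unrecognized contract duration: {value!r}")
        amount = int(match.group(1))
        unit = (match.group(2) or "month").lower()
        return amount * 12 if unit.startswith("year") else amount

    @computed_field
    @property
    def tv_included(self) -> bool:
        return bool(self.tv_package_name)
```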

The Illusion of Speed: Real-Time with WebSockets

Nobody likes staring at a loading spinner. Since I couldn't magically speed up the external APIs (especially you, ServusSpeed 😡), I decided to optimize for perceived performance. The solution was a two-phase loading system over a single WebSocket connection. Here's the trick:

- When a user hits "search," the backend kicks off all API calls in parallel.

- It then waits for the faster providers to respond (or for a 10-second timeout). As soon as this first batch is ready, it streams an `INITIAL_OFFERS` payload to the frontend.

- The UI instantly renders these results. The user gets data in seconds! Meanwhile, the backend is still patiently waiting for the slowpokes. When they finally finish, it sends a `FINAL_OFFERS` payload, and the UI seamlessly prompts the user to update to the complete list.

This makes the app feel incredibly fast, delivering value immediately, even if the full data wrangling takes a bit longer behind the scenes.
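
The two-phase flow can be sketched with `asyncio.wait` and its `timeout` argument, independent of the web framework. This is a minimal sketch: the message format and the `send` callback are hypothetical, and failed providers simply contribute nothing.

```python
import asyncio
from typing import Any, Awaitable, Callable, Iterable

Send = Callable[[dict[str, Any]], Awaitable[None]]


def _collect(tasks: Iterable[asyncio.Future]) -> list[dict]:
    """Merge offers from finished tasks; failed or cancelled providers contribute nothing."""
    offers: list[dict] = []
    for task in tasks:
        if task.cancelled() or task.exception() is not None:
            continue
        offers.extend(task.result())
    return offers


async def stream_offers(providers: list[Awaitable[list[dict]]], send: Send,
                        initial_timeout: float = 10.0) -> None:
    # Start every provider call at once.
    tasks = [asyncio.ensure_future(call) for call in providers]
    # Phase 1: wait until everything is done or the timeout expires, whichever comes first.
    done, pending = await asyncio.wait(tasks, timeout=initial_timeout)
    await send({"type": "INITIAL_OFFERS", "offers": _collect(t for t in tasks if t in done)})
    # Phase 2: keep waiting for the slowpokes, then send the complete list.
    if pending:
        await asyncio.wait(pending)
    await send({"type": "FINAL_OFFERS", "offers": _collect(tasks)})
```

Inside a FastAPI WebSocket handler, this could be driven as `await stream_offers(calls, websocket.send_json)`.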

Going Above and Beyond: The Smart Features

A Truly "Recommended" Sort

I didn't want the default "Recommended" sort to be random. So, I wrote a custom client-side algorithm that scores each offer based on what a real user might value. It calculates a weighted score considering:

- The effective monthly price over 24 months.

- Download and upload speeds.

- Bang for your buck (speed per euro).

- Connection type (Fiber gets a nice boost!).

- Extra goodies like TV packages or youth discounts.
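
The real algorithm runs client-side in the Next.js frontend; to keep all examples in one language, here's a Python sketch of the same idea. The field names, the normalization, and every weight and bonus value below are made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights and bonuses, not the values used in BetterSurf.
W_PRICE, W_DOWN, W_UP, W_VALUE = 0.35, 0.25, 0.10, 0.20
FIBER_BONUS, TV_BONUS, YOUTH_BONUS = 0.05, 0.03, 0.02


@dataclass
class Offer:
    name: str
    monthly_cents: int       # regular monthly price
    promo_cents: int         # discounted monthly price during the promo
    promo_months: int        # how long the promo price applies
    setup_cents: int         # one-time fee
    download_mbps: int
    upload_mbps: int
    connection_type: str     # e.g. "fiber", "dsl", "cable"
    tv_included: bool = False
    youth_discount: bool = False


def effective_monthly_cents(o: Offer, months: int = 24) -> float:
    """Average monthly cost over `months`, including the promo period and setup fee."""
    promo = min(o.promo_months, months)
    total = o.promo_cents * promo + o.monthly_cents * (months - promo) + o.setup_cents
    return total / months


def rank_recommended(offers: list[Offer]) -> list[Offer]:
    if not offers:
        return []
    prices = [max(effective_monthly_cents(o), 1.0) for o in offers]  # avoid division by zero
    cheapest = min(prices)
    max_down = max(max(o.download_mbps for o in offers), 1)
    max_up = max(max(o.upload_mbps for o in offers), 1)
    # Speed per cent; the unit cancels out once normalized against the best value.
    values = [o.download_mbps / p for o, p in zip(offers, prices)]
    best_value = max(max(values), 1e-9)

    def score(i: int) -> float:
        o = offers[i]
        s = W_PRICE * cheapest / prices[i]          # 1.0 for the cheapest offer
        s += W_DOWN * o.download_mbps / max_down
        s += W_UP * o.upload_mbps / max_up
        s += W_VALUE * values[i] / best_value       # bang for your buck
        s += FIBER_BONUS if o.connection_type.lower() == "fiber" else 0.0
        s += TV_BONUS * o.tv_included + YOUTH_BONUS * o.youth_discount
        return s

    order = sorted(range(len(offers)), key=score, reverse=True)
    return [offers[i] for i in order]
```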

Smarter Input, Less Frustration

To make searching easier, I integrated the Google Places API for address autocompletion. This drastically cuts down on typos. It's the only input method, allowing the user to type the address in any format as long as Google Places can find a matching result. On the security side, the API key is domain-locked to production. I also added a "Recent Searches" feature that remembers the user's last five searches in `localStorage`, letting them review a comparison with a single click. This internally uses the same mechanism as the shareable links (our next topic), meaning the implementation was relatively straightforward.

Spotlight: A Shareable Link That Actually Works

I wanted to build a sharing feature that was easy to use and works reliably. Here's how I did it:

- When a user clicks "share," the frontend sends the search filters, the offer slug (which the backend issues to identify a set of search results) and, optionally, an offer key (when sharing a single offer) to a dedicated backend endpoint.

- The backend takes the filters and the slug, compresses them with zlib, and encodes them into a URL-safe slug using base64url.

- It then saves the actual list of offers from that search in Redis, using the slug as the key and setting it to expire in 24 hours.

- When someone opens the shared link, the frontend uses the slug to request the exact results from the API, which loads them from Redis, perfectly recreating the original search.
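
The encode/decode round trip is small enough to show in full. This is a minimal sketch: the short JSON keys, the payload layout, and keying Redis by the share slug are assumptions; the `set(name, value, ex=...)` call matches redis-py's API for storing a value with an expiry.

```python
import base64
import json
import zlib
from typing import Any, Optional

SHARE_TTL_SECONDS = 24 * 60 * 60  # shared results live for 24 hours


def encode_share_slug(filters: dict[str, Any], offer_slug: str,
                      offer_key: Optional[str] = None) -> str:
    payload: dict[str, Any] = {"f": filters, "s": offer_slug}  # hypothetical short keys
    if offer_key is not None:
        payload["o"] = offer_key  # only present when a single offer is shared
    raw = json.dumps(payload, separators=(",", ":"), sort_keys=True).encode("utf-8")
    # zlib first, then base64url; the "=" padding is stripped to keep the URL clean.
    return base64.urlsafe_b64encode(zlib.compress(raw, 9)).rstrip(b"=").decode("ascii")


def decode_share_slug(slug: str) -> dict[str, Any]:
    padded = slug + "=" * (-len(slug) % 4)  # restore the padding stripped above
    return json.loads(zlib.decompress(base64.urlsafe_b64decode(padded)))


def save_shared_offers(redis_client: Any, slug: str, offers: list[dict[str, Any]]) -> None:
    # redis-py: set(name, value, ex=seconds) stores the value with a TTL.
    redis_client.set(slug, json.dumps(offers), ex=SHARE_TTL_SECONDS)
```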

Ready for Prime Time: Testing & Deployment

A Culture of Testing

A complex system like this is brittle without tests. I used pytest to build a suite that exercised every file and achieved roughly 93% line coverage. The key was aggressive API mocking: I used monkey-patching to replace network calls with fake responses. This let me simulate everything: success, weird errors, timeouts, you name it. It was the only way to be sure my resilience logic actually worked. Another huge part of testing focused on the factory logic. No matter what a provider returned, the factories had to either produce a valid Offer object or discard the offer, while still salvaging as much data as possible from malformed output.
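
Here's a minimal sketch of that style of test using pytest's `monkeypatch` fixture. `ByteMeClient` and its `_download_csv` method are hypothetical stand-ins for a real provider class; each test swaps the network call for a canned response or error.

```python
import pytest


class ProviderError(Exception):
    """A provider answered with something we can't use."""


class ByteMeClient:
    """Hypothetical provider client; only _download_csv touches the network."""

    def _download_csv(self) -> str:
        raise RuntimeError("real network call; tests must patch this")

    def offer_names(self) -> list[str]:
        lines = self._download_csv().strip().splitlines()
        if not lines or lines[0] != "productName,speed":
            raise ProviderError("unexpected payload")
        return [line.split(",")[0] for line in lines[1:] if line]


def test_happy_path(monkeypatch: pytest.MonkeyPatch) -> None:
    monkeypatch.setattr(ByteMeClient, "_download_csv",
                        lambda self: "productName,speed\nFiber 100,100\n")
    assert ByteMeClient().offer_names() == ["Fiber 100"]


def test_html_error_page(monkeypatch: pytest.MonkeyPatch) -> None:
    monkeypatch.setattr(ByteMeClient, "_download_csv",
                        lambda self: "<html>502 Bad Gateway</html>")
    with pytest.raises(ProviderError):
        ByteMeClient().offer_names()


def test_timeout(monkeypatch: pytest.MonkeyPatch) -> None:
    def slow(self):
        raise TimeoutError("read timed out")
    monkeypatch.setattr(ByteMeClient, "_download_csv", slow)
    with pytest.raises(TimeoutError):
        ByteMeClient().offer_names()
```

Running `pytest` picks these up automatically, and the fixture undoes each patch when its test finishes.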

From My Laptop to the Cloud

The backend is fully containerized with Docker and Docker Compose, bundling the FastAPI app, Nginx, and Redis. This stack lives on an AWS EC2 instance where Nginx acts as a reverse proxy. Everything is tucked behind AWS CloudFront for a global CDN and SSL. The Next.js frontend is deployed on Vercel for its killer CI/CD workflow, edge network, and ease of use.

One huge lesson learned here was about state. My first Share implementation used a simple in-memory cache. It worked fine on my machine because, in dev, I used Uvicorn with a single worker. But in production, with multiple Gunicorn workers, it was a disaster! Each worker had its own separate cache, so the Share state wasn't actually shared: a link created via one worker could be requested from another that had never seen it. This "aha!" moment led me to adopt Redis as a centralized cache, even though I had initially wanted to avoid it as overkill (I wasn't allowed to cache results anyway). Now every worker reads the same shared state.

Looking Back: Lessons and Future Plans

This project was a beast, in the best way possible. It was an intense crash course in real-world software engineering.

What I Learned in the Trenches

- Practical API Wrangling: I got my hands dirty with everything from ancient SOAP to modern REST, learning how to tame each one.

- True Resilience Engineering: I didn't just read about the Circuit Breaker pattern; I built it, debugged it, and understood why it's so critical.

- The Art of Real-Time UX: I learned how to use WebSockets to build an experience that feels fast and responsive, even when the backend is working hard. I also learned how important it can be to hide what's actually going on internally. The user doesn't have to know that any API is slow; they see results and are happy.

- Production Headaches & Cures: I learned firsthand why things that work in development break in production, and how tools like Redis solve real scaling problems.

If I Could Do It Again...

With the benefit of hindsight, there are a few things I'd refine:

- Cleaner API Interfaces: I'd refactor the provider classes to be even more focused on their single responsibility, making them easier to maintain.

- Formal Dependency Injection: A backend architecture designed around dependency injection would make testing and configuration even more streamlined.

- Hyper-Responsive Fetching: I'd evolve the two-phase loading to a multi-phase system that streams results as soon as any single provider finishes.
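
A sketch of what that could look like with `asyncio.as_completed`, which yields results in completion order rather than submission order. The `PROVIDER_OFFERS` and `DONE` message types and the `send` callback are hypothetical.

```python
import asyncio
from typing import Any, Awaitable, Callable, Optional

Send = Callable[[dict[str, Any]], Awaitable[None]]


async def _tagged(name: str, call: Awaitable[list[dict]]) -> tuple[str, list[dict], Optional[Exception]]:
    """Attach the provider name to the outcome so completion order doesn't lose it."""
    try:
        return name, await call, None
    except Exception as exc:  # one failing provider must not stop the stream
        return name, [], exc


async def stream_as_completed(providers: dict[str, Awaitable[list[dict]]], send: Send) -> None:
    tasks = [asyncio.ensure_future(_tagged(name, call)) for name, call in providers.items()]
    for next_done in asyncio.as_completed(tasks):
        name, offers, error = await next_done
        # One message per provider, sent the moment that provider finishes.
        await send({"type": "PROVIDER_OFFERS", "provider": name,
                    "offers": offers, "ok": error is None})
    await send({"type": "DONE"})
```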

The Wishlist for BetterSurf 2.0

Now, if I were developing this for real, there are a few things I'd consider essential.

- Smarter Caching: While caching was forbidden by the challenge, I'd add a multi-level cache in Redis for both provider responses and full search results.

- Database Integration: Store offers in a PostgreSQL database to track price trends, analyze the market over time, and avoid relying on third-party APIs for results.

- Full-Stack Testing: Add a frontend testing suite with Jest and React Testing Library, plus end-to-end tests with Cypress or Playwright.

- UI/UX Polish: Add features like pagination, a theme switcher, and other refinements based on user feedback.

Known Quirks & Considerations

Now to the less fun part. Of course, there are parts that aren't fully perfect. These could be solved by investing more time, but I judged that given the scope of the challenge these are acceptable trade‑offs. However, one should still be aware of them.

- Minor Visual Gremlins: You might spot an occasional flicker in the UI (like the status bar). It's superficial and doesn't affect functionality.

- Frontend Modularity: Some frontend components could be broken down even further. The current structure is robust, but there's always room for more refinement.

- Google Places API Version: The browser console might hint at updating the Google Places SDK. The current version is fully supported and works perfectly.

- Google Maps SDK Chatter: A harmless, CORS-related warning from the Google Maps SDK might pop up in the console. It's a known diagnostic message that doesn't affect anything.

- Frontend Development: While my frontend skills were up to par for the challenge, I noticed a few gaps. Often I wasn't aware of simple Next.js solutions to problems I'd encountered, which led me to over‑engineer things that could have been one line. This also hurt my code style a bit; I'm not quite happy with how the frontend code is architected and how it reads. That said, I'm relatively new to frontend and Next.js, so this is definitely an area where I can grow, and this project was perfect for that. What I learned here will carry over into my future projects; many of those lessons already have.

Final Thoughts

Building BetterSurf was a marathon, not a sprint. It was a rigorous exercise in managing complexity, designing for failure, and polishing a user experience. It solidified my confidence in architecting and building a production-grade application from the ground up. I'm incredibly grateful for the GenDev stipend and can't wait to apply these lessons to the next big challenge. A huge shoutout to the entire CHECK24 team involved with this challenge and making it possible for everyone who competed to test and prove their skills.

BetterSurf pushed my technical and problem-solving skills to their limits. Over the course of this project, I deepened my expertise in API integration, resilience engineering, real-time data streaming, and scalable cloud deployment. I also got better at designing robust architectures under tight constraints, adapting quickly to unpredictable third-party systems, and delivering a seamless user experience despite backend complexity.

More Reading

If you're interested in reading more about this project, feel free to check out my GitHub repository, where you can find the full code in all its (sometimes questionable) glory.